This Google Feature Accidentally Created a Scammer's Paradise

How "AI Price Check" is training business owners to blindly trust the next generation of vishing calls

Google’s AI is calling businesses to check prices on behalf of real customers. It sounds helpful… until you realize we’re training every business owner in America to trust robotic voices asking detailed questions about their operations.

I first started thinking about this back in August while I was at DefCon (a large annual hacking and cybersecurity conference in Las Vegas), watching one of my favorite events: the Vishing Competition.

If you don’t know what vishing is, it’s short for voice phishing. So, instead of hackers sending you a suspicious link via email, they call you on the phone. The callers will attempt to manipulate you out of sensitive information like passwords, account access, or company data.

Here is an example of vishing that went viral on Instagram a few times:

The vishing competition at DefCon is so much fun to watch. Contestants stand in soundproof booths in front of a live audience and cold-call real businesses, using carefully crafted pretexts (scenarios) to see how much information they can extract: employee names, what software is in use, physical security details… info that could be useful in a real attack.

It’s important to note that this DefCon competition is an example of ethical hacking. The purpose isn’t exploitation; this is an approved DefCon educational event.

The pretexts can vary wildly: someone pretending to be from IT support, a nervous young delivery driver on their first day, or an inquisitive prospective client.

But one pretext in particular caught my attention:

A contestant called a business pretending to be an automated caller conducting a site audit on behalf of the company’s HQ. The contestant leaned hard into the robot character: uncanny pauses, awkward phrasing, and a weird chatbot-esque cadence. It worked beautifully. The person on the other end of the line didn’t hesitate; they assumed it was a legitimate audit from HQ and started answering questions freely.

That got me thinking:

This has the potential to be a massive vishing vector in 2026.

And then, just a few months later, Google rolled out a feature that validated (and escalated) every concern I had.

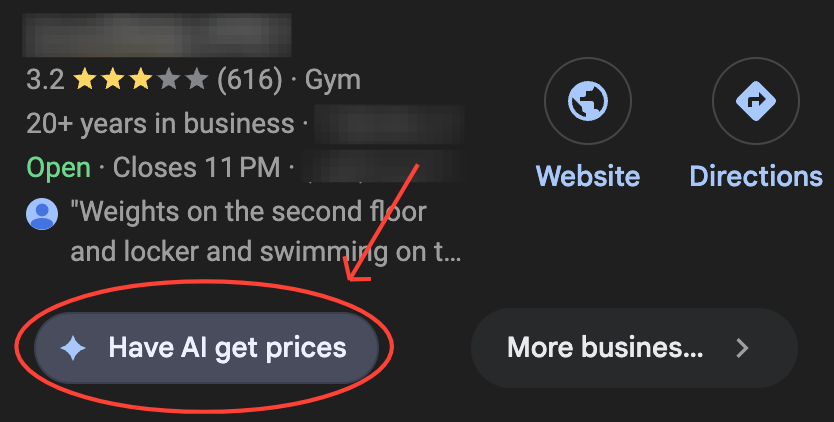

Google’s AI Price Check Feature

In late 2025, Google launched something called ‘Have AI Check Prices.’ This feature tasks an AI agent with calling local businesses to get info (pricing, services, etc.) on behalf of real customers. The user requesting the data first answers a few questions, then Google’s AI agents get to work.

First, they crawl the sites of local businesses looking for answers. If they can’t find clear info, the agent calls the business directly. And finally, Google compiles all the info it was able to find into a comparison report for the prospect.

The growing popularity of this feature has already started reshaping how businesses operate online. SEO advisors and marketing consultants (myself included) are now telling business owners to be ready for these AI calls.

And it’s not just a price check feature. Google’s AI calling capabilities extend to making restaurant reservations, confirming appointments, and verifying business hours. And all of these features use automated AI voice call technology.

The infrastructure for AI-to-business phone communication is becoming ubiquitous.

And here’s what I’m concerned about… we’re creating the perfect social engineering playground!

We are directly conditioning business owners to expect AI voice calls asking detailed questions about their business. We’re training them to respond quickly and thoroughly because every call could be a qualified lead. And we’re doing this at scale, across every service-based industry.

We’re effectively gifting an attack surface to scammers.

A Vishing Playground

Traditional phishing relies on creating urgency, confusion, and trust to extract sensitive info. Email phishing has become harder as people have grown wiser to the red flags. Grammatical errors, suspicious sender addresses, weird links: we’ve been (mostly) trained to spot these.

Voice phishing is different.

Imagine you run a small business. You’re getting three or four legitimate AI calls a week from Google. You’ve trained your staff to handle these calls professionally because each one could represent real revenue. You’re thinking, “Speed to lead matters.”

Now an AI agent scammer calls.

The voice sounds identical to the Google AI you talked to yesterday. It follows the same opening script and asks questions about your services. But then it pivots: “To complete the integration with Google’s new booking system, I need to verify your Google Business Profile credentials,” or “There’s a discrepancy in your payment information. Can you confirm the last four digits of the business account on file?”

Your staff member, already primed to be helpful to AI callers, doesn’t even hesitate.

As these AI calls proliferate, we’re building muscle memory around complying with automated AI calls that ask questions about the business. We’re teaching people to trust the format.

The Scalability of AI Vishing

Traditional vishing required human labor. A single person could maybe make 20 to 30 calls a day. That’s the bottleneck.

But AI-based vishing is not limited by human bandwidth. You can spin up hundreds, even thousands of concurrent calls with the same AI voice, and the same social engineering scripts. You could be calling every business in a metropolitan area simultaneously. All running remotely from anywhere in the world. No call center needed. No humans required.

The economics of fraud are changing.

For the cost of some API calls and a decent voice cloning model, you can target every small business owner in America who’s desperate not to miss a lead. And because these calls can sound identical to legitimate AI service calls, the success rate might be higher than that of traditional vishing or email phishing.

This is industrialized social engineering at a scale we’ve never experienced.

What Legitimate AI Callers Won’t Ask

If we’re going to navigate this, business owners and their staff need to know the boundaries:

Legitimate AI calling services will only ask about information that should reasonably be publicly available:

service offerings and descriptions

pricing and package details

availability and scheduling

business hours

service area or location coverage

They should NEVER ask for:

login credentials for any platform

banking info or routing numbers

SSNs or EINs

access to your systems or CRM

personal info about employees or customers

verification codes sent to your phone

permission to ‘integrate’ or ‘sync’ accounts

Honestly, this is a good rule of thumb for ANY phone call, not just AI ones. Never give out sensitive information over the phone, especially if you didn’t initiate the call yourself to a number you’ve verified.

But will people remember this in the moment, when they’re already used to being helpful to AI callers and missing a lead could mean missing rent?

I’m worried this desperation may create an ideal attack surface.

Where This Could Lead

I keep thinking about what happens if these attacks start working at scale.

Imagine a few high-profile breaches make the news. Maybe a restaurant chain loses access to its reservation system. A dental practice has its patient database compromised. Small businesses across a city report a wave of similar AI calls, all asking for “verification” credentials, that turn out to be vishing attempts.

How do business owners react? Do they start treating every AI call with suspicion, hanging up on legitimate Google price checks because they can’t tell the difference anymore? And what happens to the businesses that protect themselves by not engaging? Do they just disappear from AI-generated recommendation lists?

I wonder if we’ll see a trust erosion cycle similar to what happened with “Microsoft Support” scams. Those became so prevalent that people stopped trusting actual Microsoft support calls. But this time, it wouldn’t just affect one company’s support line. It would affect the entire emerging ecosystem of AI-assisted commerce.

Maybe we’ll develop better verification protocols before it gets that bad. Maybe AI calling services will implement authentication standards that make impersonation harder. Or maybe we’ll just muddle through, with businesses trying to navigate an increasingly murky landscape where helpful automation and malicious exploitation can look the same.

I don’t have the answer. But I do know companies are releasing new features faster than the public is thinking through the consequences.

And in the gap between innovation and adaptation, cybersecurity awareness is definitely NOT optional.

For business owners, or for anyone with a connection to the internet and something to lose, understanding these threats is not something you can delegate or ignore. Anyone on your staff could unknowingly answer one of these calls. Everyone has to be trained and aware.

The hackers are counting on you not knowing. Don’t give them that advantage.

Tech Glossary

Phishing — A type of cyberattack where scammers impersonate trusted entities (like banks, companies, or services) through email, text, websites, or calls to trick people into revealing sensitive information such as passwords, credit card numbers, or account credentials. The term comes from "fishing" because attackers are "fishing" for your data by using fake bait (legitimate-looking messages).

Social Engineering — A manipulation technique that exploits human psychology rather than technical vulnerabilities. Attackers use trust, urgency, fear, or authority to trick people into doing risky things like sharing passwords or clicking malicious links.

Vishing (Voice Phishing) — A type of social engineering attack conducted over the phone, where scammers use voice calls to trick people into revealing sensitive information. The voice equivalent of email phishing.

DefCon — One of the world’s largest and most well-known hacking conferences, held annually in Las Vegas. It features talks, workshops, and competitions focused on cybersecurity, hacking techniques, and information security.

Pretext — A fabricated scenario or false identity used in social engineering attacks to build trust and manipulate targets. Examples include pretending to be IT support, a vendor, or an automated system.

Agentic AI — AI systems that can take actions on behalf of users, like making phone calls, booking appointments, or comparing prices, rather than just answering questions. They act as autonomous agents with specific goals.

Attack Surface — All the points where an unauthorized user could potentially enter a system or extract data. Every new feature or integration is a potential new attack surface.

Attack Vector — The specific path or method an attacker uses to gain unauthorized access to a system. In this article, the attack vector is AI calls that mimic legitimate automated services.

Trust Erosion Cycle — A pattern where security breaches lead to decreased trust, which leads to people avoiding legitimate services.

API (Application Programming Interface) — A set of rules and protocols that allows different software systems to communicate with each other. AI services use APIs to make calls, extract data, and integrate with other platforms.
